
In either case, the easiest way to start something at boot is via /etc/rc.local, which is executed by the init system. Note that the current init system is actually systemd, but a lot of documentation refers to the older SysV-style init. The first non-kernel code executed on POSIX-style operating systems such as Raspbian is init, and everything else descends from it.

Execution of code at boot does not require anyone to be logged in. Logically enough, it's the other way around: there must be appropriate software running first in order for anyone to log in. You can enable auto-login using raspi-config on Pi-based operating systems such as Raspbian. Unless you are using it in a very unusual way, an Arduino neither requires a login nor has the potential for one, because it does not run an operating system.

Arducam’s latest Raspberry Pi camera module, Hawk-eye, is now available for pre-order, somehow cramming 64 megapixels into a sensor measuring just 7.4mm x 5.55mm.
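As a rough illustration of the /etc/rc.local approach, here is a minimal sketch of a script that could be started at boot. The file name `boot_task.py`, its location and the log path are hypothetical, not something the original answer specifies. Assuming the script lives at `/home/pi/boot_task.py`, a line such as `python3 /home/pi/boot_task.py /var/log/boot_task.log &` placed before the final `exit 0` in /etc/rc.local would run it. Since nobody is logged in at that point, the script writes to a log file rather than to a terminal.

```python
import datetime
import sys


def log_boot_event(log_path, message="started at boot"):
    """Append one timestamped line to log_path and return that line."""
    stamp = datetime.datetime.now().isoformat(timespec="seconds")
    line = f"{stamp} {message}\n"
    # Append mode keeps the entries from earlier boots.
    with open(log_path, "a", encoding="utf-8") as log_file:
        log_file.write(line)
    return line


# Only act when a log path is given on the command line,
# e.g. from the (hypothetical) rc.local line shown above.
if __name__ == "__main__" and len(sys.argv) > 1:
    log_boot_event(sys.argv[1])
```

rc.local normally runs as root, so the script can write to system locations. The trailing `&` matters for anything long-running, because otherwise the rest of the boot sequence waits for it to finish.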

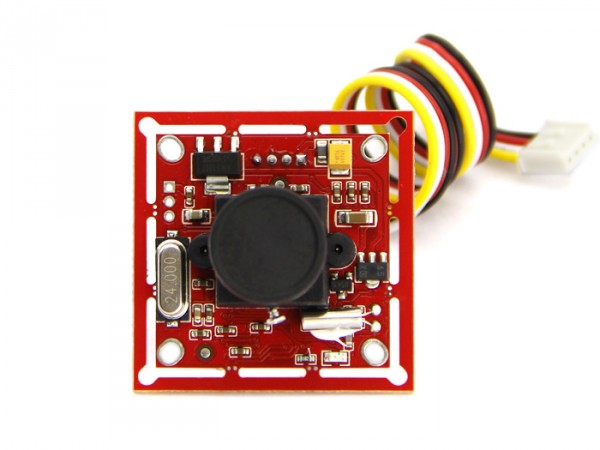

How can I use a Raspberry Pi standalone so that it works just like an Arduino? The Pi is a considerably more complex piece of hardware. You can't use it exactly like an Arduino, or at least not easily, and it would not be very desirable to do so.

Typical application areas are flying drones, subsea ROVs and autonomous land-based vehicles. Arducam, the innovative embedded camera manufacturer, has designed a fully synchronised stereo vision HAT (Hardware Attached on Top) for the Raspberry Pi. There are currently two versions available, with 5MP or 8MP sensors. Both camera systems have integrated autofocus lenses, so the cost is limited to just the stereo package and the Raspberry Pi.

Focal Length: 2.8 mm. Angle of View: 77.6 degrees (diagonal). Shutter Type: Rolling Shutter. 1/4-inch 8MP IMX219 sensor for sharp images, Max.

See a typical Scorpion 3D Vision application for a great example.

For a real-time depth sensing application (autonomous vehicles are an obvious beneficiary) we also need synchronised images from the two sensors. We know from talking to our customers that this is one of the challenges they face even before they write the code that extracts the depth measurement.

For simple depth sensing using machine vision, it can be expensive and time-consuming to set up dual cameras, trigger both cameras simultaneously and process the image data on the host computer.

Also, perhaps this is something you would normally only expect to see on an Intel- or Nvidia-powered computer platform. Autofocus lens with an angle of view of 77.6 degrees (diagonal) and a focal length of 2.8mm. It attaches to Raspberry Pi by one of the two small sockets on the upper side of.

So, as we know, cameras in a stereo vision configuration can be used for depth sensing or height measurement. In the world of machine vision, we talk about X and Y for locating an object in 2D, but we can get Z with stereo cameras. If we can add a measurement in the Z axis, we now have a 3D system, which opens up many additional possibilities over and above the benefit of depth sensing (distance from cameras to target).

Raspberry Pi Camera Module V2 is an add-on for Raspberry Pi 3, 2, B+ and A+. For a robot-with-camera autonomous driving project, I originally planned to use a Due for motor control with the Arduino PID library, and a Pi (Zero) with camera as the video processing subsystem.
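To make the Z measurement from a stereo pair more concrete: for two rectified, synchronised cameras, depth is commonly estimated with the pinhole relation Z = f * B / d, where f is the focal length in pixels, B is the baseline (distance between the two lenses) and d is the disparity in pixels. This relation is standard stereo geometry rather than something the text above states. The function name and the numbers below are made up for illustration and are not Arducam specifications.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Return the distance Z in metres for one matched point.

    focal_px     -- focal length expressed in pixels (assumed known from calibration)
    baseline_m   -- distance between the two camera centres, in metres
    disparity_px -- horizontal shift of the point between left and right image
    """
    if disparity_px <= 0:
        # Zero disparity means the point is effectively at infinity.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Illustrative values only: 1000 px focal length, 10 cm baseline.
near = depth_from_disparity(1000.0, 0.1, 50.0)
far = depth_from_disparity(1000.0, 0.1, 10.0)
print(near, far)
```

A smaller disparity corresponds to a more distant target, which is why depth resolution gets worse with range and why tight synchronisation between the two sensors matters for moving scenes.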
